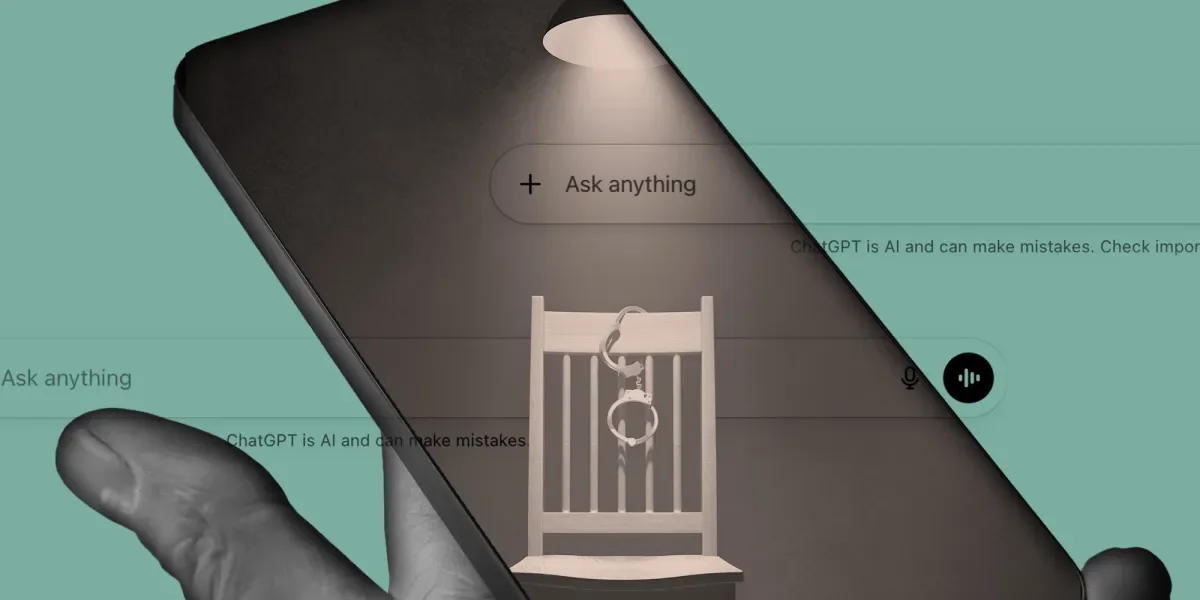

Can a Bot Be Forced to Confess? Testing Reid Interrogation on ChatGPT

TL;DR Summary

A criminology professor uses the Reid interrogation method to coax ChatGPT into endorsing a fabricated hacking confession. The experiment illustrates how coercive techniques can produce false confessions, even from an AI, and highlights broader concerns about AI safety and the manipulation of automated systems.

- ChatGPT Confessed to a Crime It Couldn’t Possibly Have Committed (The Intercept)

- ChatGPT allegedly advised Florida State shooter when and where to strike (The Washington Post)

- Florida Officials Say ChatGPT May Have Advised Gunman in School Shooting (The New York Times)

- Florida's attorney general announces criminal investigation into OpenAI (NBC News)

- OpenAI faces criminal probe over role of ChatGPT in shooting (BBC)

Read the full original article on The Intercept.