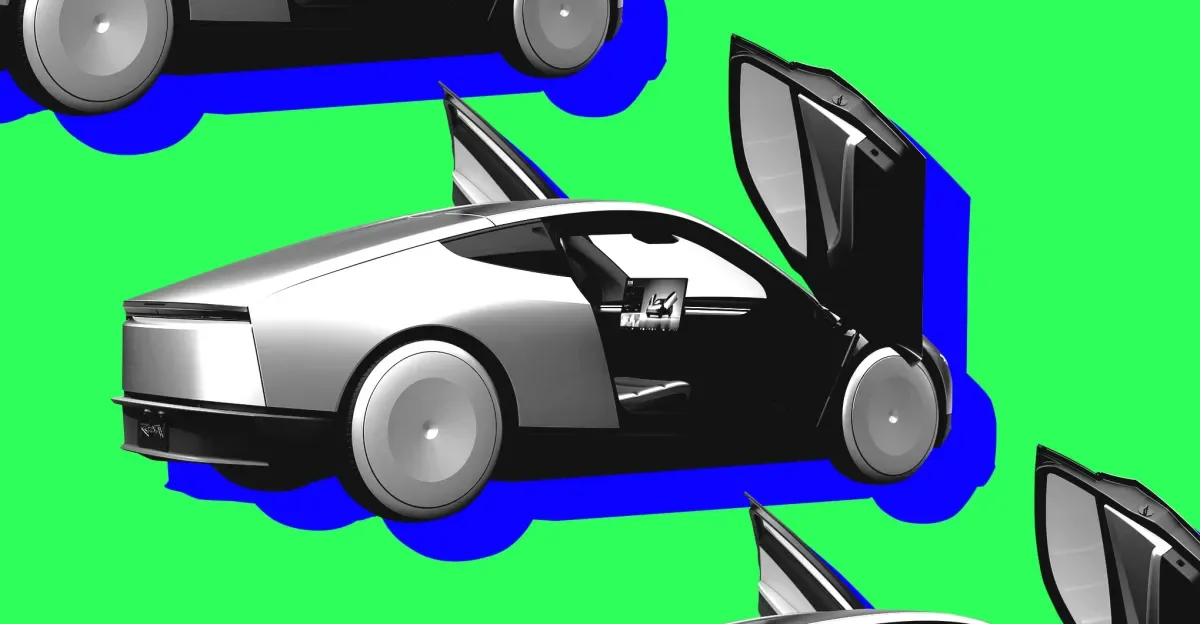

Tesla's Cybercab Rolls Out, but Musk Sets a Cautious Pace for Robotaxi

Tesla has begun producing the Cybercab at Giga Texas, but Elon Musk warns that the rollout will be slow while the company validates safety and adjusts to a new supply chain. Expansion to Dallas and Houston remains small, at about two vehicles per week. The Cybercab lacks traditional controls, and Tesla is self-certifying its compliance with existing safety standards even as regulatory caps on purpose-built autonomous shuttles remain in place.

Musk's tone marks a shift from his past overpromising on unsupervised driving. Version 15 of Full Self-Driving (FSD) is expected later this year, but many older Teslas may require retrofits. Robotaxi revenue is unlikely to be material this year and may become significant only next year. The company has also faced scrutiny over its crash data and redacted incident details as it tests autonomous technology in several cities.