Anthropic Teases Personal AI Fluency Scorecard in Claude

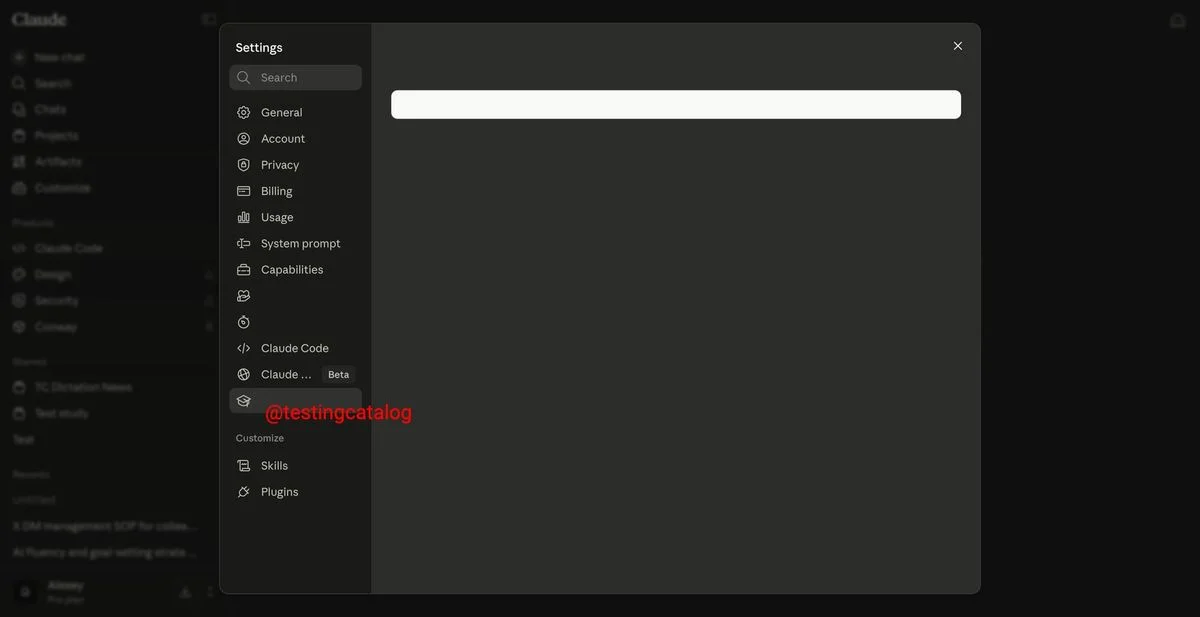

Anthropic is turning its AI Fluency research into a user-facing feature in Claude: an AI Fluency scorecard in Settings that rates your activity across Chat, CoWork, and Claude Code. The scorecard highlights iteration and refinement as the strongest demonstrated behavior, and Anthropic's AI Fluency study found that habit to be the strongest predictor of good AI use. The scorecard reports a score as a fraction, for example 7.5 out of 11, and offers guidance on which habits to strengthen, all accessible directly from Claude. The move nudges users toward safer, more deliberate collaboration with AI. As a next step, users can try memory to broaden their signal and workflow.