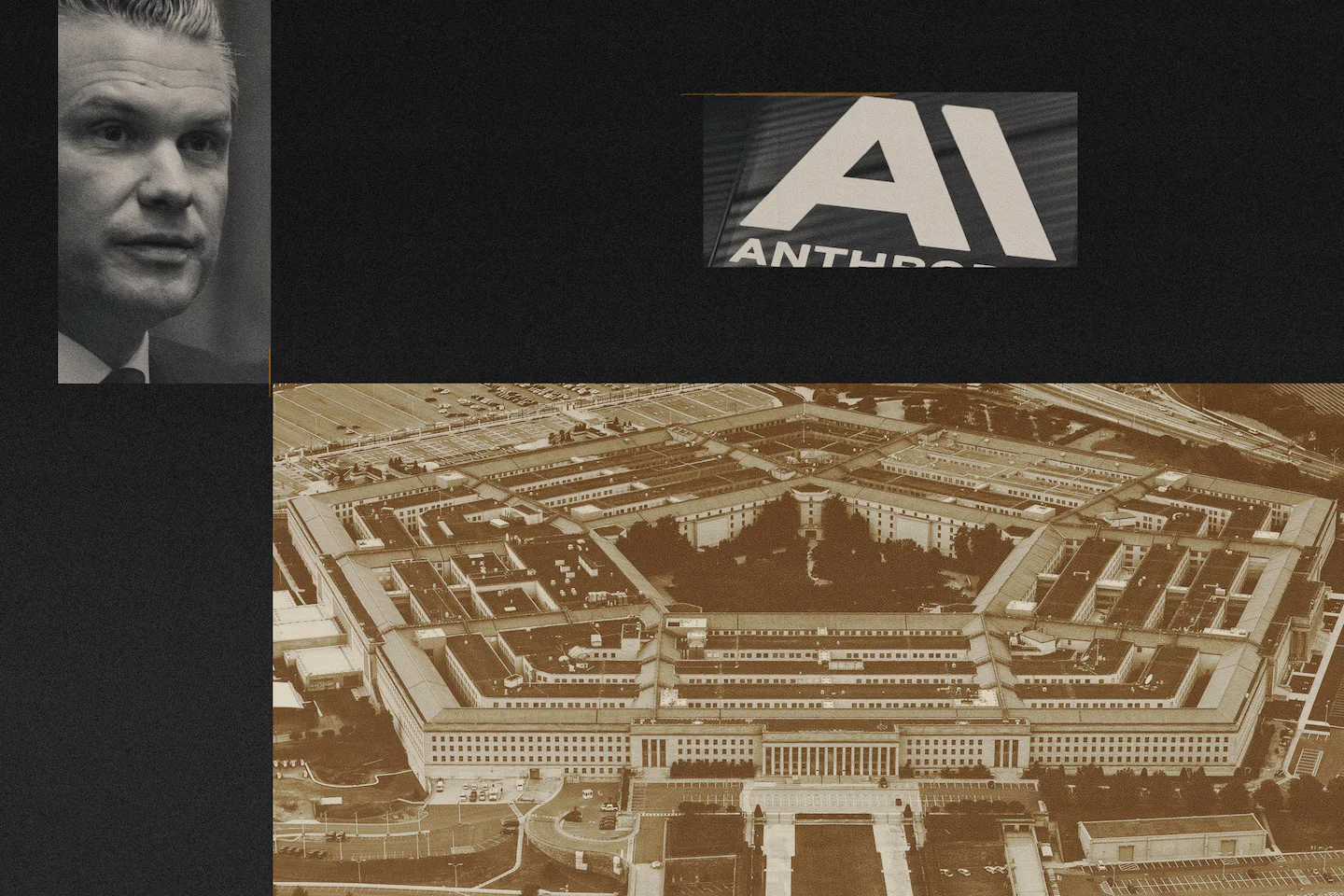

The Quiet Rise of AI in Modern Warfare

A sweeping look at how AI is already shaping warfare, from Project Maven's analysis of drone-surveillance footage and Google's involvement in that program to Anthropic's red lines on mass surveillance and autonomous targeting. The piece argues that fully autonomous weapons are not here yet. Even so, policy, contracts, and technical developments are compressing kill chains and moving decision-making closer to machines, and there is little international consensus on how to define such weapons or whether to ban them.