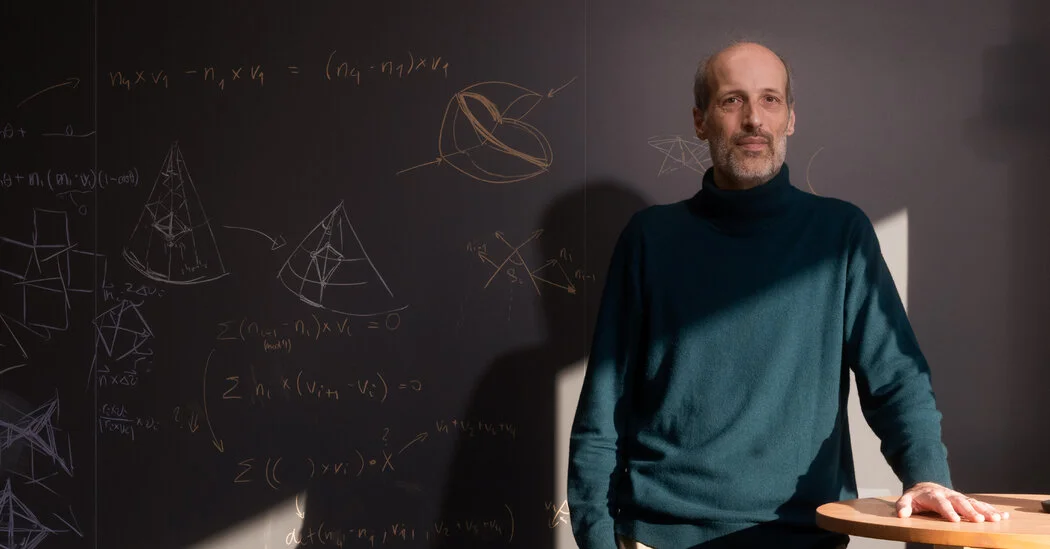

Can Machines Feel What They Say? Rethinking AI Consciousness

The piece argues that consciousness remains a deep mystery even as large language models produce fluent text, a combination that has prompted debate over whether AI can be conscious. It outlines two competing views: that LLM output might arise without any inner experience, or that these systems could be conscious. It also notes that there is no consensus test for machine consciousness, whether one assesses the hardware running the model or the software that runs on it.