Tough Peer Feedback May Boost a Paper’s Future Impact

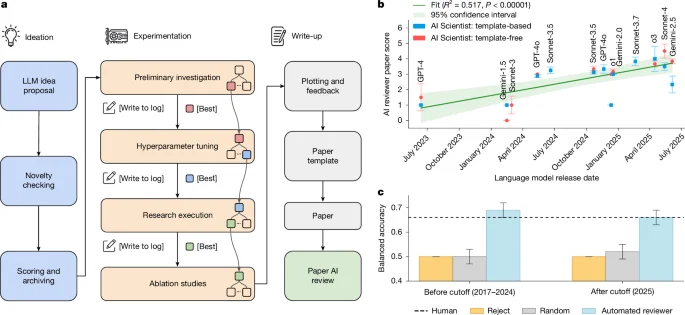

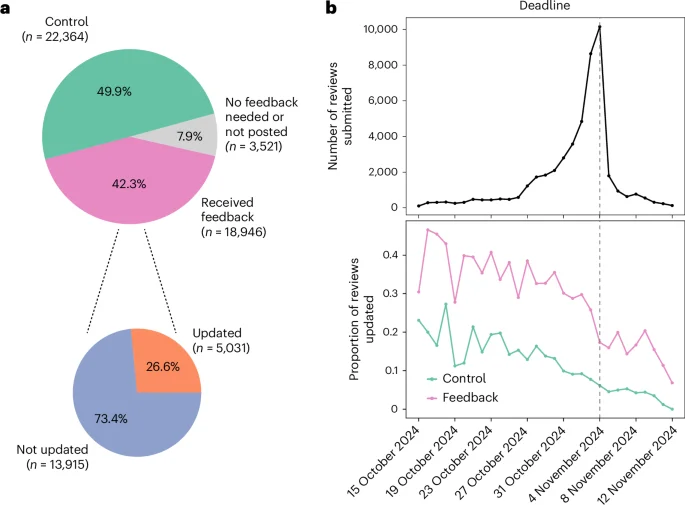

An AI-assisted analysis of public peer-review reports for 8,000 Nature Communications papers (2017–2024) finds that papers subjected to tougher criticism and larger revision costs tend to be more highly cited in the following three years. Although the quality of reviewer comments correlates with impact, the constructiveness of the feedback does not, suggesting that rigorous review can both reflect ambitious work and help raise its future influence.