Bozeman police locate missing 10-year-old girl

Bozeman police say Kathleen, a 10-year-old girl reported missing on Saturday, has been found safe; no further details were released.

All articles tagged with #child safety


The European Commission plans to regulate 'addictive design' features on TikTok and Instagram—such as endless scrolling, autoplay, and push notifications—and push for stronger age-verification tools to keep minors off the platforms. A legal proposal could be ready by summer after expert-panel input, reflecting a global push to protect children from online harms.

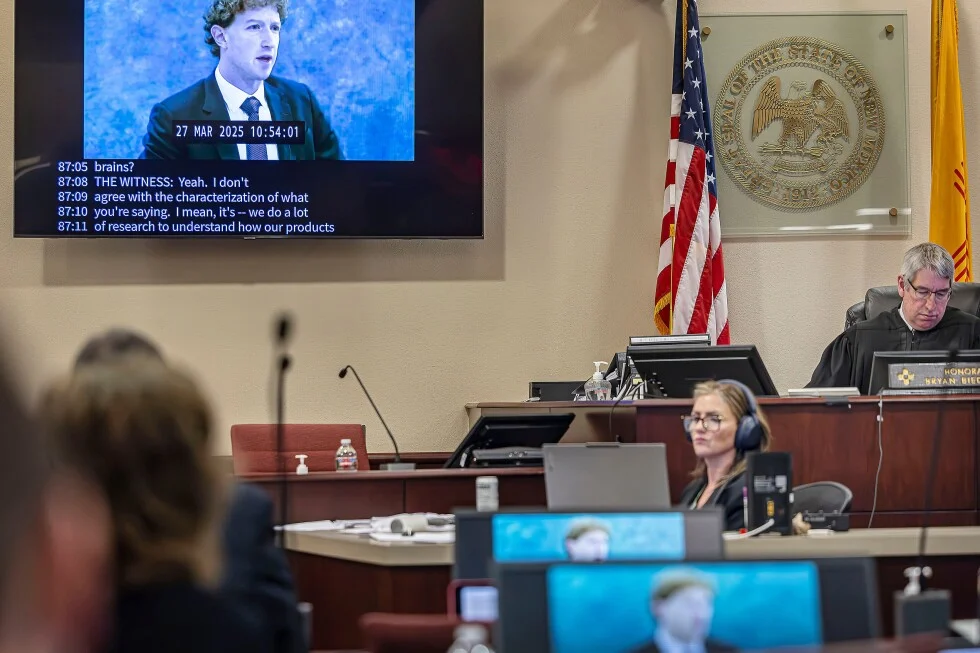

Meta Platforms could face up to $3.7 billion in damages in a New Mexico child-safety case after a jury found willful unfair-practices violations; a bench trial will decide whether Meta's actions caused broader public harm, potentially forcing changes to Facebook and Instagram such as stronger age checks and safer recommendation systems. Meta says the demand is unrealistic and has warned it could shutter access to its platforms in New Mexico if no deal is reached. The case could set a precedent for future social-media lawsuits as other actions against major platforms proceed.

New Mexico AG Raúl Torrez won $375 million against Meta, and a three-week public nuisance trial will decide what remedies Meta must implement on Facebook, Instagram, and WhatsApp. Proposed measures include age verification, prohibiting end-to-end encryption for users under 18, capping teen use at 90 hours per month, limiting engagement features like infinite scroll and autoplay, and aiming for 99% CSAM detection. The court’s ruling could influence tech regulation and settlements beyond New Mexico, though Meta argues the mandates would threaten privacy and parental rights.

Meta warned in a deposition that it could pull or limit access to its social platforms in New Mexico as a court battle over child-safety protections intensifies.

In the remedies phase of New Mexico's landmark child-safety suit, Meta says the state’s proposed reforms (age verification, safer algorithms and safeguards, warnings, and court oversight) could lead it to withdraw Facebook, Instagram and WhatsApp from the state, arguing that many of the measures are technologically infeasible. The state is pursuing a court-ordered fix.

Influencer Kelly Hopton-Jones says she accidentally ran over her 2-year-old son Henry in the garage as she was leaving with her daughter; Henry has a fractured pelvis but is recovering. Hopton-Jones, a pediatric nurse practitioner, shared the incident on Instagram and stressed the importance of driveway safety around vehicles, offering tips to prevent backover accidents.

Keir Starmer pressed Meta, TikTok, Google, Snap and X at Downing Street to curb harm to children, signaling possible Australia-style under-16 bans and other age-based restrictions. The government is consulting on features and controls under the Online Safety Act, with Ofcom oversight, amid growing cross-party pressure for action.

A viral video from Alpharetta, Georgia, shows a mother poolside when a large tree cracks and falls toward her chair; her quick-thinking son yells for her to run, and she narrowly escapes as the chair is smashed. Officials suggest heavy spring rainfall may have weakened the tree. The moment has been shared widely online, with praise directed at the boy for his rapid warning.

Authorities rescued a 9-year-old boy who had been locked inside his father’s utility van in eastern France for about two years, since 2024; the boy is safe, and investigators are examining the circumstances of his confinement.

A Los Angeles jury found Meta and YouTube liable for deliberately designing addictive features that harmed a young user and awarded $6 million in damages. The verdict sparked global calls for meaningful, child-protective design changes, and rights groups praised it as a watershed for accountability; some critics warn of potential free-speech and privacy implications as regulators consider broader protections beyond the courts.

A New Mexico jury found Meta Platforms violated state consumer-protection law by misleading users about safety on Facebook, Instagram and WhatsApp and enabling child exploitation, ordering $375 million in civil penalties; Meta says it will appeal as it faces broader scrutiny over youth safety and platform design.

A New Mexico state court jury found Meta liable for violating consumer protections by failing to shield children on Facebook, Instagram, and WhatsApp, ordering $375 million in damages. The case, rooted in an undercover operation and investigative reporting into child exploitation, also highlighted deficient reporting and overreliance on AI moderation. Meta plans to appeal as it faces two more child-safety trials and a separate federal suit, with potential changes like age-gating and encryption-design adjustments on the horizon.

A New Mexico jury ordered Meta to pay $375 million for harming children’s mental health and exposing minors to sexual content, finding the company violated state unfair-practices laws and prioritized profits over safety; Meta says it will appeal as related cases continue.

A New Mexico jury ruled Meta liable for consumer-protection violations and ordered $375 million in civil penalties for misleading users about platform safety and enabling harm, including child sexual exploitation. It is the first jury verdict finding Meta liable for acts on its platforms; Meta plans to appeal while the state seeks further penalties and stronger safeguards, such as enhanced age verification and restrictions on encrypted messaging for minors.